Selected Publications & Research

My work focuses on extracting actionable insights from large, complex datasets — ranging from the evolution of the universe to real-world healthcare outcomes. With a background in theoretical physics and computational modelling, I specialise in building scalable software tools and applying advanced statistical methods to bridge the gap between theoretical models and messy, high-dimensional data.

My expertise spans Machine Learning, simulations, and Real-World Evidence. Whether optimising precision medicine protocols in Biotech or modelling large-scale structures in Cosmology, my goal is to turn massive data streams into evidence-based decisions.

Clinical Computer Vision & Assay Automation

Developing deep learning workflows to automate the identification of rare circulating tumour cells, replacing manual interpretation with reproducible, genomically validated AI classification.

- Industry Impact: Streamlined a time-consuming clinical workflow by automating the identification of Multiple Myeloma cells. By using DEPArray for genomic validation, I ensured that the “Ground Truth” used to train the AI was confirmed at the genomic level, a critical requirement for Precision Medicine and Regulated Diagnostics (RUO/IVD).

- Key Skills: Computer Vision, Ground Truth Engineering, Rare Cell Detection, Clinical Workflow Optimisation.

- Tools: [TensorFlow] [Python] [Keras] [Deep Learning] [Digital Pathology] [Image Processing]

Spatiotemporal Data Integrity & Large-Scale Simulations

Advanced modelling of large-scale systems and complex dynamical networks. I apply fluid dynamics analogues and spatiotemporal analysis to build robust “Digital Twins” for urban environments, logistics, and precision agriculture.

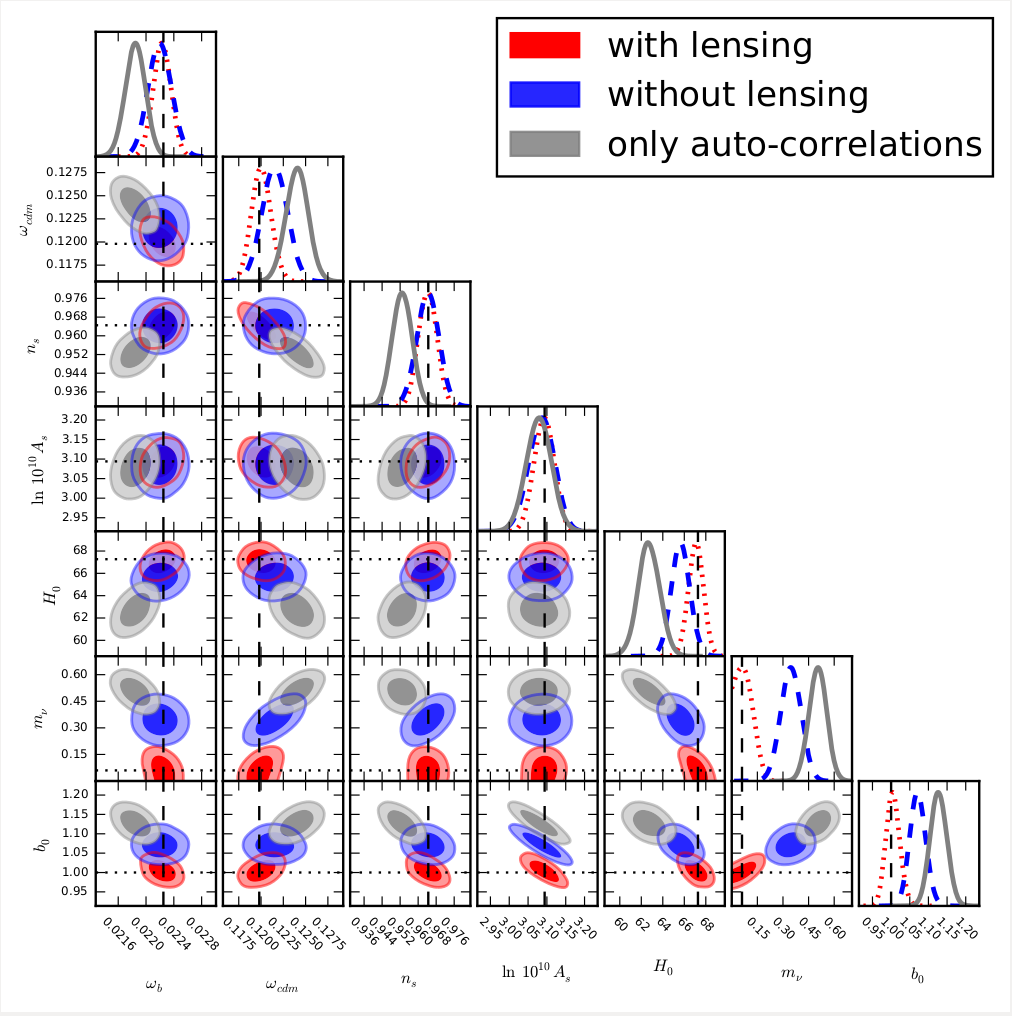

- Industry Impact: Demonstrated that ignoring “inter-layer” interference (lensing) leads to significant errors in high-stakes parameter estimation. This methodology is directly transferable to Smart Farming (e.g., correcting satellite signal interference for crop yield) and Smart Cities (e.g., isolating variables in complex urban traffic networks).

- Key Skills: Bias Mitigation, High-Dimensional Parameter Estimation, Cross-Correlation Analysis, Error Propagation.

- Tools: [Bayesian Statistics] [Markov Chain Monte Carlo (MCMC)] [Python/C++] [Simulations]

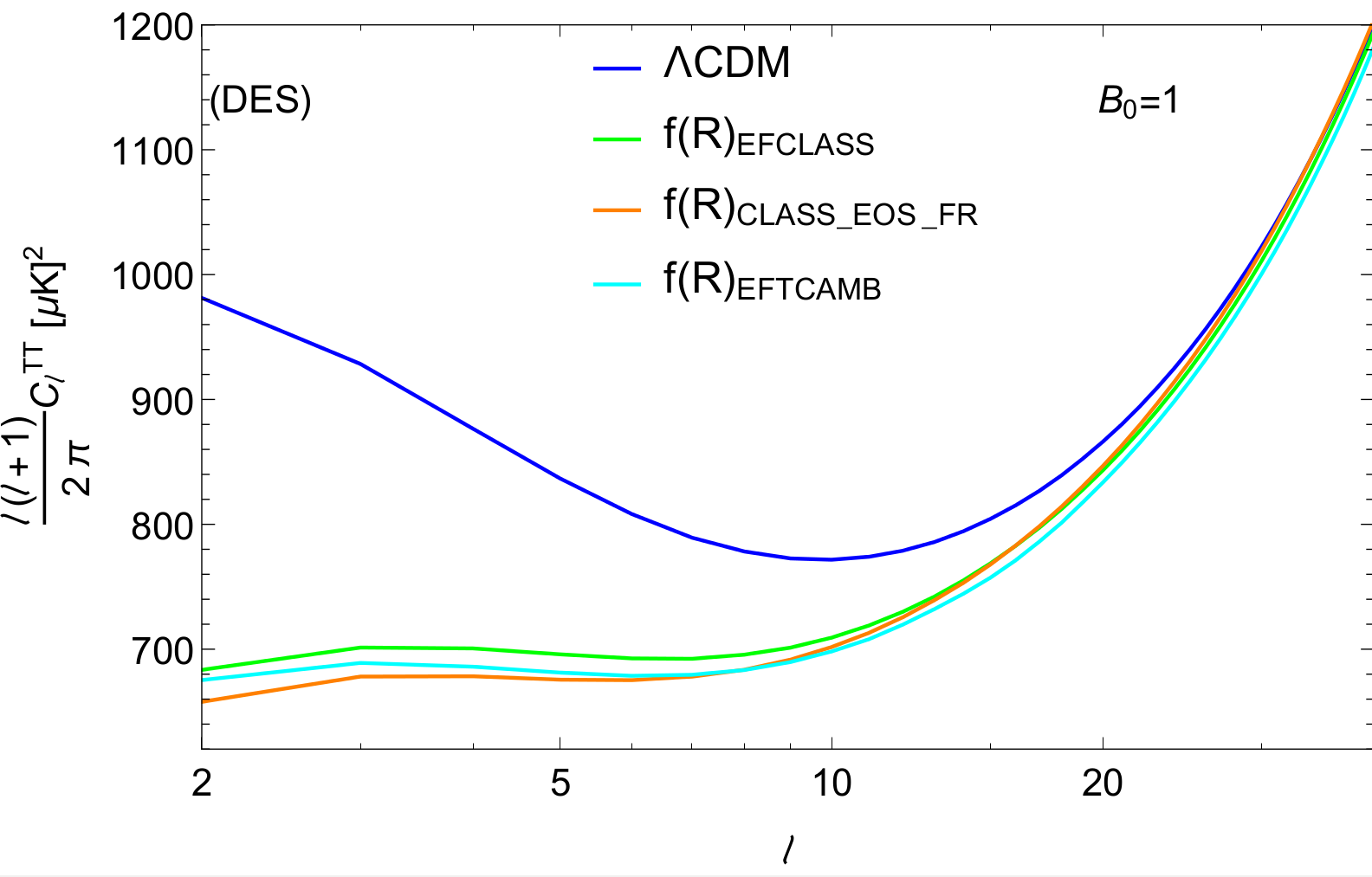

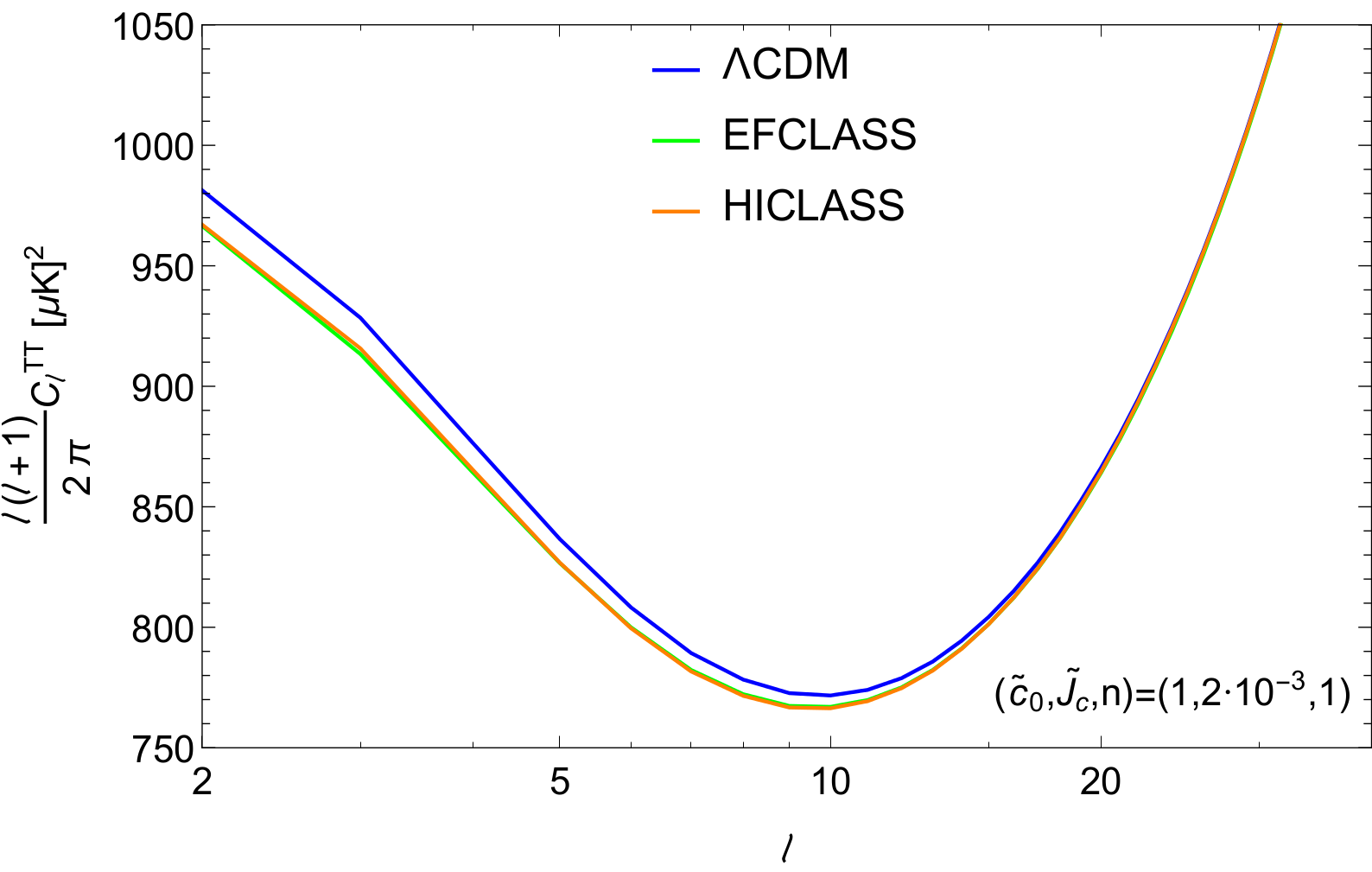

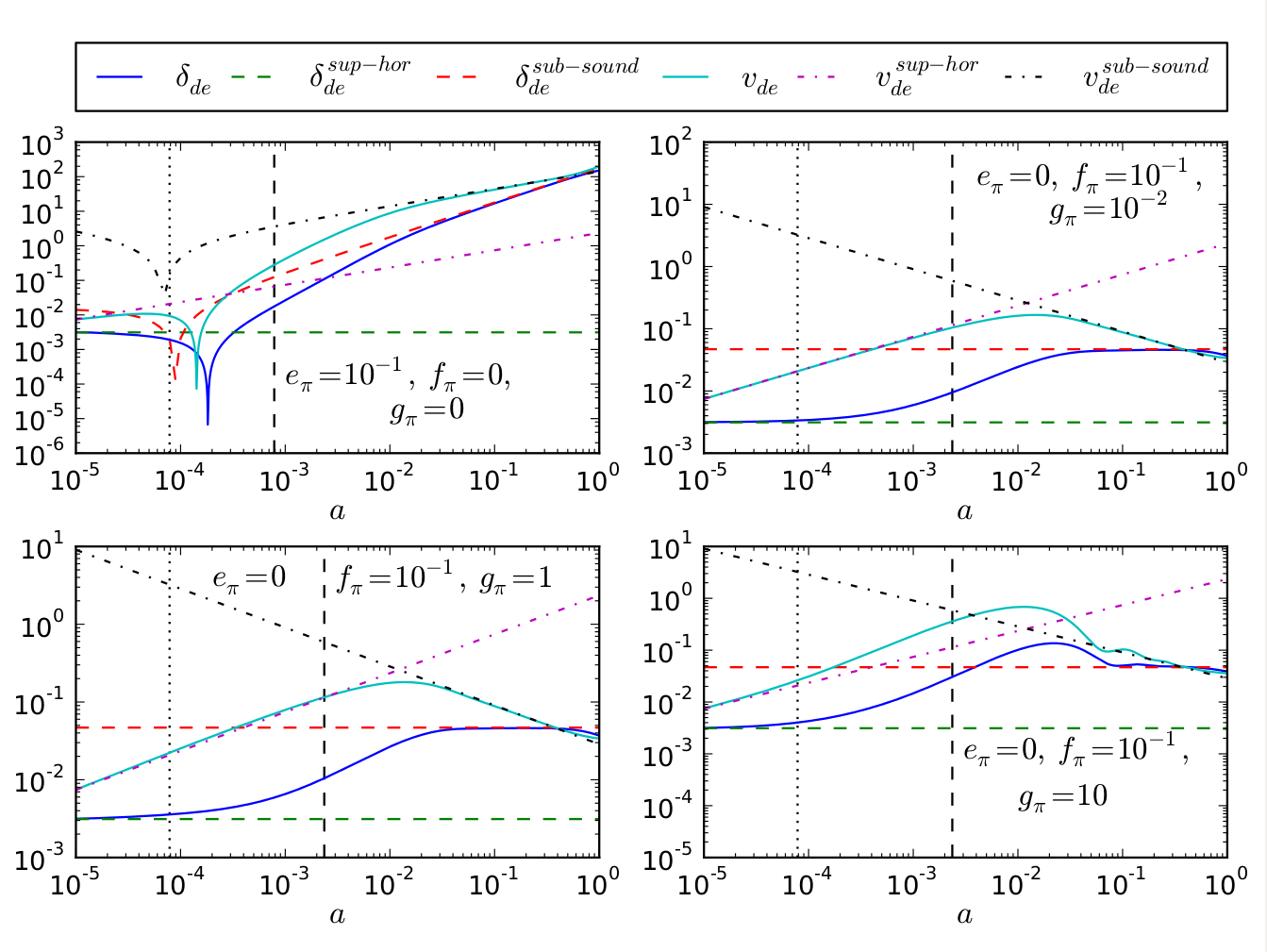

- Industry Impact: Engineered an “Effective Fluid” approach to simplify high-dimensional physical systems without losing predictive accuracy. This technique is highly applicable to Smart Cities (modelling urban traffic or energy as a fluid) and Supply Chain Logistics (optimising the flow of goods through complex networks).

- Key Skills: Dimensionality Reduction, Complex System Optimisation, Fluid Dynamics Analogues.

- Tools: [Numerical Modelling] [System Dynamics] [Analytical Approximation]

Explainable AI & Automated Rule Discovery

Transforming high-dimensional data into transparent, actionable insights using Symbolic Regression and Bayesian Inference. I specialise in model compression and uncertainty quantification for high-stakes decision-making in Public Policy and Social Sciences.

- Industry Impact: Replaced complex, computationally expensive models with a simplified, “human-readable” formula that improved accuracy by 10% while significantly reducing complexity. This approach is vital for Public Policy and Smart Cities, where model transparency is required for regulatory compliance and stakeholder trust.

- Key Skills: Symbolic Regression, Genetic Algorithms, Model Compression, Explainable AI (XAI).

- Tools: [Python] [Genetic Programming] [Symbolic Regression] [Boltzmann Solvers]
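To give a flavour of what a “human-readable” surrogate formula means, here is a deliberately tiny sketch. It is an exhaustive search over four hypothetical candidate forms, not the genetic-programming pipeline listed above, and the `target` function, the candidate set, and every name in it are assumptions made purely for illustration.

```python
import math

# Hypothetical building blocks for candidate formulas (illustrative only).
CANDIDATES = {
    "x": lambda x: x,
    "x**2": lambda x: x * x,
    "sqrt(x)": math.sqrt,
    "log(1+x)": math.log1p,
}


def target(x):
    """Stand-in for an expensive model; an assumed toy function."""
    return 3.0 * x * x + 2.0


def fit_linear(f, xs, ys):
    """Least-squares fit of y = a*f(x) + b; returns (a, b, mse)."""
    fs = [f(x) for x in xs]
    n = len(xs)
    mean_f = sum(fs) / n
    mean_y = sum(ys) / n
    var_f = sum((v - mean_f) ** 2 for v in fs)
    cov = sum((v - mean_f) * (y - mean_y) for v, y in zip(fs, ys))
    a = cov / var_f
    b = mean_y - a * mean_f
    mse = sum((a * v + b - y) ** 2 for v, y in zip(fs, ys)) / n
    return a, b, mse


def best_formula(xs, ys):
    """Try every candidate form and keep the one with the lowest error."""
    results = []
    for name, f in CANDIDATES.items():
        a, b, mse = fit_linear(f, xs, ys)
        results.append((mse, name, a, b))
    mse, name, a, b = min(results)
    return name, a, b, mse


# Sample the "expensive" model on a grid of strictly positive points.
xs = [0.1 * i for i in range(1, 51)]
ys = [target(x) for x in xs]

name, a, b, mse = best_formula(xs, ys)
print(f"y = {a:.3f} * {name} + {b:.3f}   (mse = {mse:.2e})")
```

A real symbolic regression run explores a far larger space of expressions and usually penalises complexity as well as error; the point here is only that the output is a formula a reader can inspect directly.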

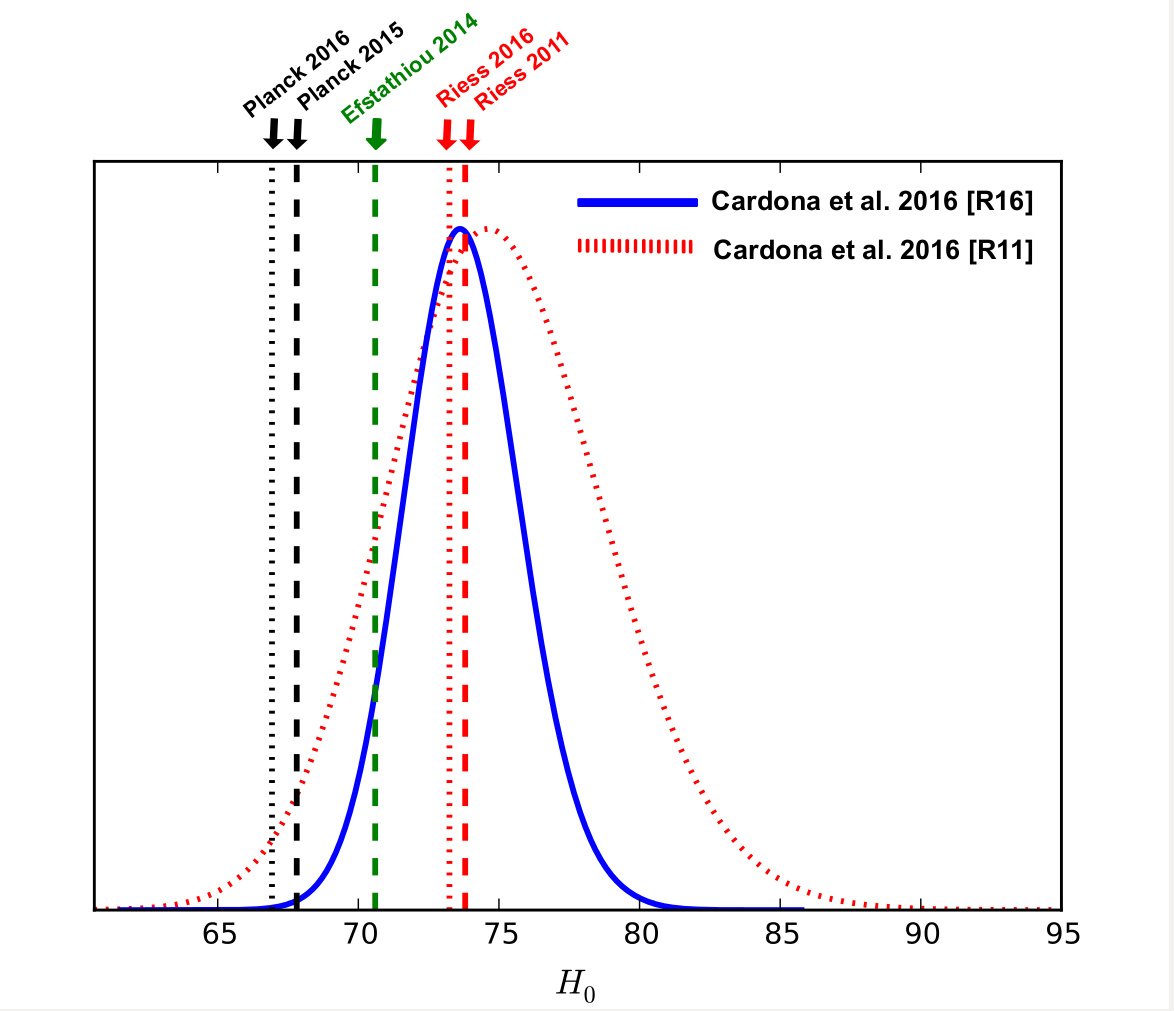

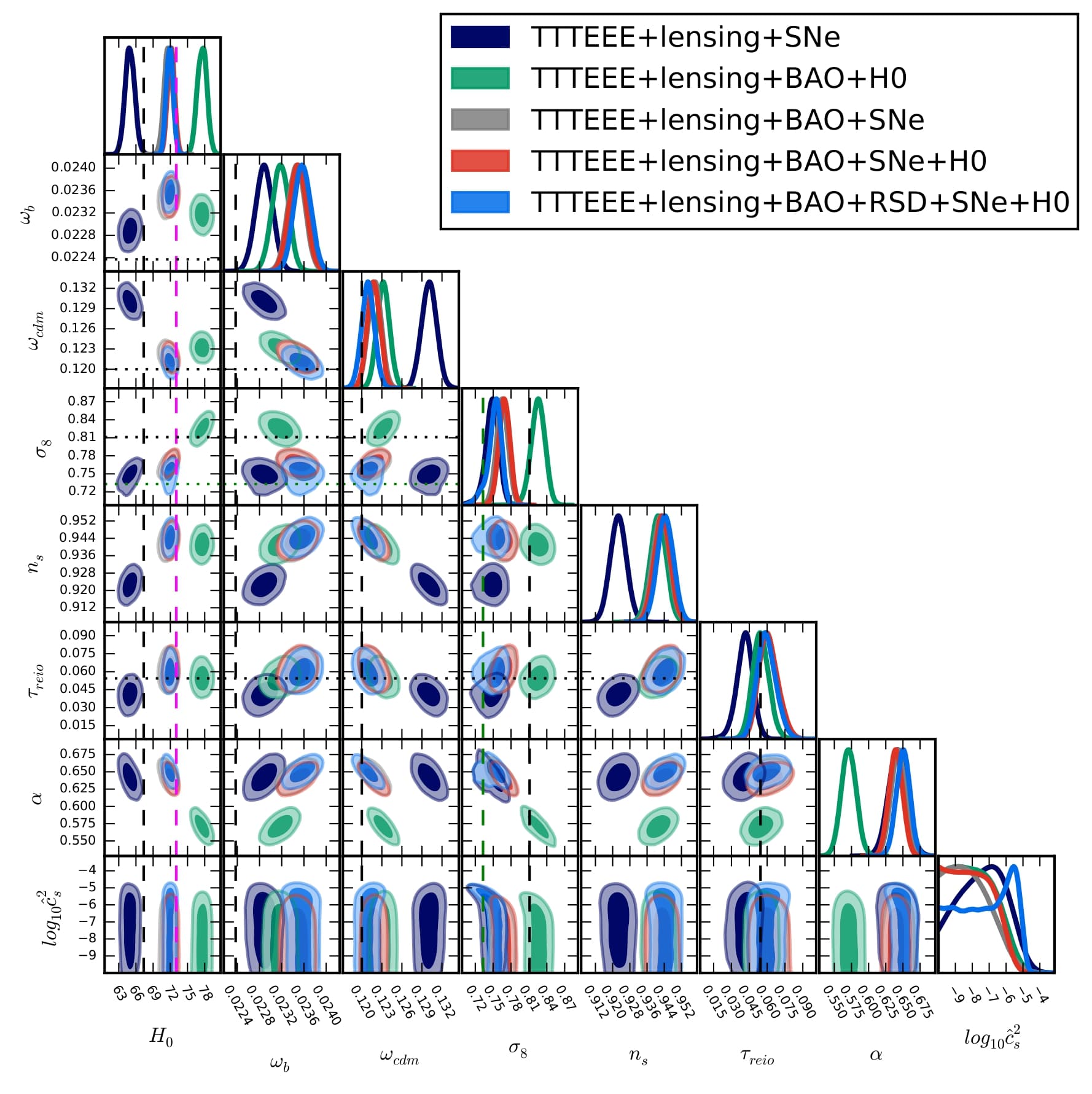

- Industry Impact: Developed a Bayesian framework to reconcile discrepant data sources (the H0 tension). This methodology is crucial for Public Policy and Strategic Planning, where decision-makers must weigh conflicting reports from different regions or agencies to arrive at a single, reliable “Truth”.

- Key Skills: Bayesian Hyperparameter Weighting, Statistical Data Fusion, Conflict Resolution in Data.

- Tools: [Bayesian Inference] [MCMC] [Python]
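As a toy illustration of the data-fusion problem (and not the hyperparameter framework itself), the sketch below combines two discrepant Gaussian measurements with inverse-variance weights, quantifies their tension in units of sigma, and inflates the combined error when the scatter exceeds what the quoted errors allow. The error-rescaling step is a simpler, swapped-in technique, and the input numbers are illustrative assumptions rather than results quoted from any specific analysis.

```python
import math


def combine(values, sigmas):
    """Inverse-variance weighted mean of independent Gaussian measurements.

    Returns (mean, sigma, chi2), where chi2 measures the scatter of the
    inputs around the combined mean.
    """
    weights = [1.0 / s**2 for s in sigmas]
    total = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total
    sigma = math.sqrt(1.0 / total)
    chi2 = sum(w * (v - mean) ** 2 for w, v in zip(weights, values))
    return mean, sigma, chi2


def tension(v1, s1, v2, s2):
    """Difference between two measurements in units of their joint sigma."""
    return abs(v1 - v2) / math.sqrt(s1**2 + s2**2)


def inflated_sigma(sigma, chi2, n):
    """Rescale the combined error by sqrt(chi2 / (n - 1)) when that exceeds 1."""
    scale = math.sqrt(chi2 / (n - 1))
    return sigma * max(1.0, scale)


# Illustrative inputs (assumed values, in km/s/Mpc).
values = [67.4, 73.0]
sigmas = [0.5, 1.0]

mean, sigma, chi2 = combine(values, sigmas)
print(f"combined: {mean:.2f} +/- {sigma:.2f}")
print(f"tension:  {tension(values[0], sigmas[0], values[1], sigmas[1]):.1f} sigma")
print(f"inflated error: {inflated_sigma(sigma, chi2, len(values)):.2f}")
```

A naive weighted mean reports a small error bar even when the inputs disagree strongly; rescaling the error is one blunt way to make that disagreement visible, whereas a hyperparameter treatment lets the data themselves decide how much weight each source deserves.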

Scientific Background & Full Academic Records

Over the past several years I have worked on several aspects of cosmology and astrophysics, keeping a close connection between theory and observations. Theoretical cosmology is one of my main research interests, and I have been involved in a few projects building cosmological models that could explain why the expansion of the Universe is accelerating. Thus far I have focused on models that invoke a possible new kind of matter, dubbed dark energy, as well as models that modify General Relativity. These alternatives to the standard cosmological model could also provide plausible explanations for current tensions in cosmological parameters such as the Hubble constant and the strength of matter clustering. I have computed cosmological constraints for several models by examining their background evolution and first-order cosmological perturbations. In doing so I have modified widely used Boltzmann solvers, written programs that carry out statistical analyses, and applied machine learning techniques to perform symbolic regression in cosmology. I have also worked on novel statistical methods for testing non-Gaussianity in the Cosmic Microwave Background, as well as for identifying possible unaccounted-for systematic errors in the determination of the Hubble constant.

I am also interested in the performance of upcoming galaxy surveys and their impact on cosmology. In particular, I carry out forecasts to examine which new effects will become relevant.

For a complete, chronological list of my 20+ peer-reviewed publications and preprints, please visit:

ORCID | Google Scholar | INSPIRE | arXiv | ADS